I'm an assistant professor at the Language Technologies Institute in the School of Computer Science at Carnegie Mellon University, working on natural language processing.

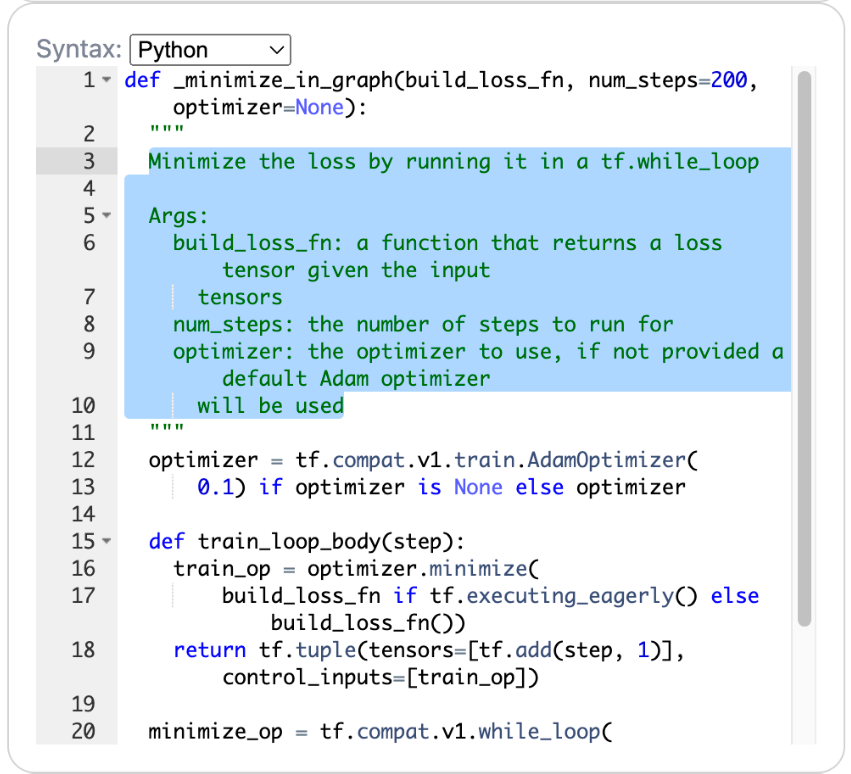

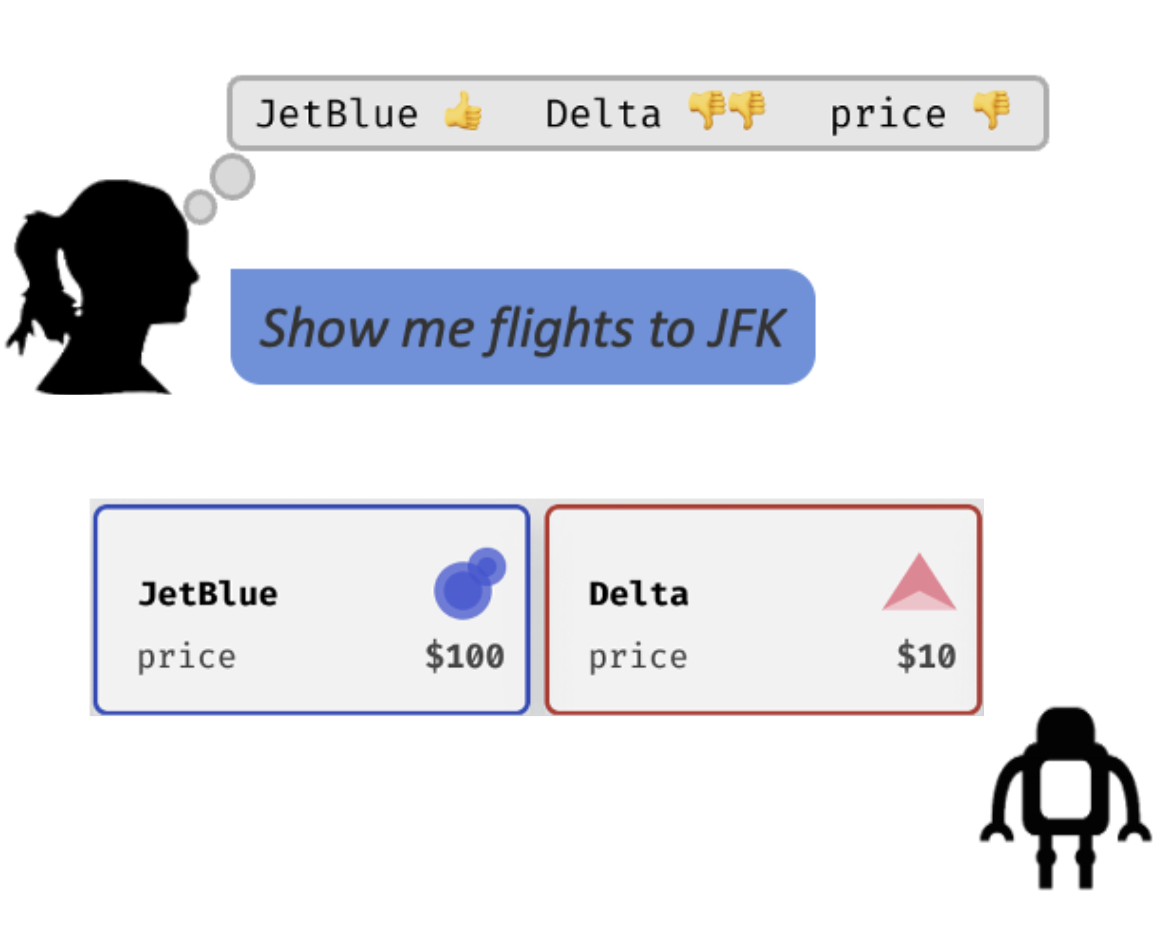

My work focuses on enabling people to use language to interact with computers to carry out useful tasks in the world. One recurring theme in my work is pragmatics: viewing language as an action that people take in context to affect their communicative partners (see our survey and position paper). I'm excited about domains where computers can complement human abilities. Recently, I've been focusing on code generation, aiming to make programming more communicative.

Before CMU, I was a postdoc at FAIR Seattle and the University of Washington. I completed a PhD at UC Berkeley in the NLP Group and the Berkeley AI Research Lab, an M.Phil. at the Cambridge Computer Laboratory, and a B.S. at the University of Arizona.

Sponsorship: Our work has been supported by gifts from the Okawa Foundation, Google, Cisco Systems, and Autodesk.

- Office: Gates-Hillman Center 6509

- Email: dfried@cs.cmu.edu (note for prospective students)

If you are a CMU student interested in working with me in the summer or fall semester, please fill out this form.

Unfortunately, I do not have internship openings for students outside CMU at this time.

Links: CV • Google Scholar • Twitter