Earlier we discussed texture mapping as a method for adding surface detail. There are really three different ways to approach surface detail, and we now have the language to talk about them. The methods are texture mapping, bump mapping, and displacement mapping.

First of all, recall that for texture mapping, points on a surface are assigned texture coordinates (s, t). How these coordinates are assigned depends on the surface. For example, flat surfaces in Renderman may be assigned the local (x, y) coordinates. A spline surface will normally inherit its (s, t) coordinates from its parameter space. There are several possibilities for spheres and cylinders.
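As one illustration of the "several possibilities" for spheres, here is a minimal sketch of a latitude-longitude assignment of (s, t). The function name `sphere_st` and the choice of the z axis as the pole are assumptions made for this example; it is not necessarily the mapping Renderman uses.

```python
import math

def sphere_st(x, y, z):
    """Map a point on a sphere centered at the origin to (s, t) in [0, 1].

    Assumed convention: s follows longitude around the z axis,
    t runs from 0 at the +z pole to 1 at the -z pole.
    """
    r = math.sqrt(x * x + y * y + z * z)
    s = (math.atan2(y, x) / (2.0 * math.pi)) % 1.0
    t = math.acos(z / r) / math.pi
    return s, t
```

A cylinder could be handled the same way, with s taken from the angle around the axis and t from the height along it.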

The (s, t) coordinates of a surface point specify which point in an image file should be used for the color of that point. Now we can be precise about what "the color of the point" means: all three color components of Kd. The texture color for a point in Renderman is returned by a procedural shader. Rather than always using a texture image, Renderman uses a general-purpose programming language called the shading language to describe surface shading. Programs are much more general than images, and they have proven very versatile in Renderman. They can be given parameters and customized to the application, and they can use randomization to generate unique patterns each time they are called.
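To make the idea of a procedural shader concrete, here is a minimal sketch in Python rather than in the Renderman shading language. It returns a color for Kd as a function of (s, t). The function name, the colors, and the brick dimensions are all hypothetical values chosen for illustration; this is not the brick shader used for the images in these notes.

```python
import math

# Hypothetical colors, chosen only for illustration.
BRICK_COLOR = (0.6, 0.15, 0.1)
MORTAR_COLOR = (0.7, 0.7, 0.7)

def brick_color(s, t, brick_w=0.25, brick_h=0.08, mortar=0.01):
    """Return the color (all three components of Kd) at texture coordinates (s, t)."""
    cell_w = brick_w + mortar   # one brick plus its mortar joint
    cell_h = brick_h + mortar
    row = math.floor(t / cell_h)
    # Shift every other row by half a cell so the joints are staggered.
    if row % 2 == 1:
        s += 0.5 * cell_w
    # Position inside the current brick-plus-mortar cell.
    u = s % cell_w
    v = t % cell_h
    in_brick = u >= mortar and v >= mortar
    return BRICK_COLOR if in_brick else MORTAR_COLOR
```

Because the pattern is computed rather than stored, parameters such as `brick_w` and `mortar` can be changed per surface without preparing a new image.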

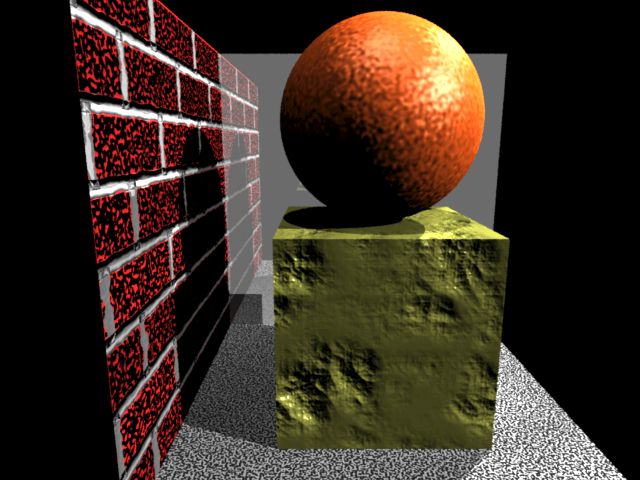

Here is an environment with 3 different texture-mapped surfaces.

The source code is here. This environment has two procedural shaders for the carpet and brick, and one image-based shader for the other wall. The image-based shader is here. Texture maps in Renderman use a special format. This image was generated by BMRT, which accepts .tif files. The procedural brick shader is here.

Texture mapping is useful, but many physical objects have surface texture that involves shape fluctuation, i.e. they are rough rather than smooth. It is quite expensive to model all this geometric detail, but there is a shortcut. Recall that the light scattered from a diffuse surface depends only on the dot product of the surface normal and the light source direction. So it's not so much the actual surface displacement that's important (although displacement does matter for shadows), but the normal variation.

Bump mapping is a technique for varying the surface normal as though there were actual height fluctuations of the surface, while the surface itself remains flat. Specifically, suppose you had a height pattern on a surface in the X-Y plane, with height H(X,Y). Without displacing the surface, you can compute the normal at a point as (-dH/dX, -dH/dY, 1), which is then normalized to unit length before shading. The minus signs make the normal tilt away from the uphill direction.
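The normal computation can be sketched as follows. This is a minimal illustration, assuming the height pattern is available as a Python function `height(x, y)` and estimating the partial derivatives by central differences; the names `bump_normal` and `diffuse` are hypothetical, and a real shader would typically get derivatives from the renderer.

```python
import math

def bump_normal(height, x, y, eps=1e-4):
    """Unit normal of the virtual surface z = height(x, y) at (x, y)."""
    # Central-difference estimates of dH/dX and dH/dY.
    dhdx = (height(x + eps, y) - height(x - eps, y)) / (2.0 * eps)
    dhdy = (height(x, y + eps) - height(x, y - eps)) / (2.0 * eps)
    nx, ny, nz = -dhdx, -dhdy, 1.0
    length = math.sqrt(nx * nx + ny * ny + nz * nz)
    return (nx / length, ny / length, nz / length)

def diffuse(normal, light_dir):
    """Diffuse term: clamped dot product of unit normal and unit light direction."""
    return max(0.0, sum(n * l for n, l in zip(normal, light_dir)))
```

The surface point itself never moves; only the normal passed to the diffuse calculation changes, which is why the shading varies while the silhouette stays flat.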

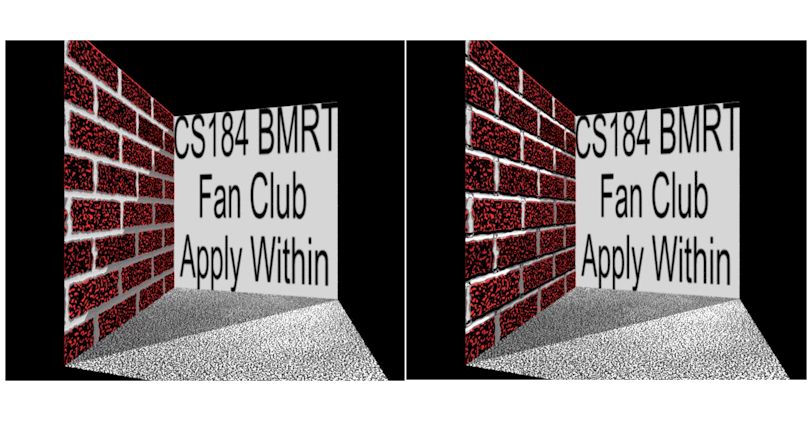

The effect can be quite compelling. Here is a side-by-side comparison of the brick texture with and without bump mapping:

Notice how the shading of upward-facing and downward-facing mortar gives a very convincing look to the image on the right. A great amount of versatility is possible with bump mapping. All surfaces in the image below were bump-mapped:

Here is the source code for this image.

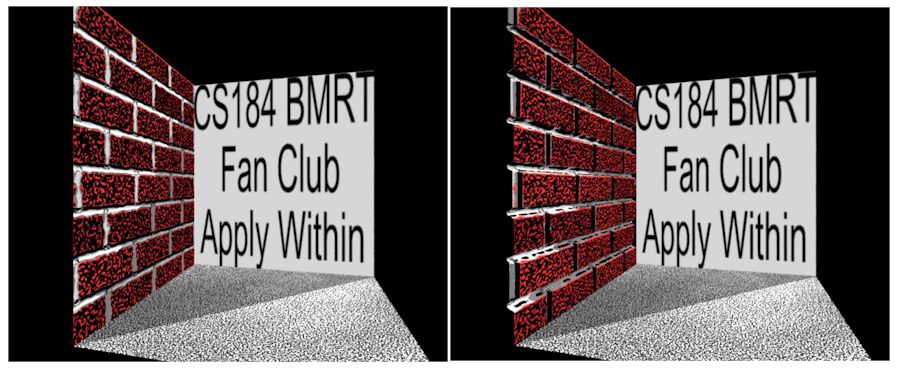

Bump mapping does a good job for small displacements. But because the normals are "out of sync" with the actual surface shape, some anomalies can occur. Also, a bump-mapped surface will not cast shadows correctly, because the surface is actually flat. Renderman supports displacement mapping, where surface points are actually moved along the surface normal. The result adds one more level of realism. Here is a side-by-side of bump-mapped and displacement-mapped brick walls:

A close-up of the right-hand image shows that the recesses in the mortar correctly receive the shadows from the other surfaces.
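The difference from bump mapping can be sketched in a few lines: in displacement mapping the point itself is moved along its normal. The function name `displace` and the example recess depth are assumptions made for illustration, not Renderman's interface.

```python
def displace(point, normal, amount):
    """Return the surface point moved by `amount` along its unit normal."""
    return tuple(p + amount * n for p, n in zip(point, normal))

# A point on a flat wall in the X-Y plane, pushed 0.02 units into the wall,
# as a mortar recess might be (hypothetical depth).
recessed = displace((1.0, 2.0, 0.0), (0.0, 0.0, 1.0), -0.02)
```

Since the geometry really changes, the renderer's shadow and visibility calculations see the recess, which bump mapping alone cannot provide.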