University of California, Berkeley EECS Dept, CS Division

Jordan Smith

SLIDE: Scene Language for Interactive Dynamic Environments

Prof. Carlo H. Séquin

The Scene Language for Interactive Dynamic Environments (SLIDE) is an educational rendering system for 3D interactive dynamic environments. The SLIDE system is comprised of three parts: a scene description language, a set of programming laboratories which build a software rendering system, and an OpenGL accelerated SLIDE rendering program. The SLIDE system has been used to teach CS184 during the Spring semesters 1998 and 1999.

The Scene Language for Interactive Dynamic Environments, SLIDE [slide], is a new software system designed to teach undergraduates about 3D computer graphics. The field of 3D Computer Graphics has been rapidly changing and maturing. At one time, 3D graphics applications required special high end machines to run, but today faster PC's with cheap high performance 3D graphics cards make 3D graphics available to a wider range of users. The creation of standard programming API's, such as OpenGL [opengl], and standard scene description languages, like RIB [renderman] and VRML [vrml], has also helped to advance the field. These standards have enabled the creation of software like VRML browsers which make it easier for novice users to experiment with 3D Computer Graphics.

These changes in the field force a reevaluation of how to teach an undergraduate course in 3D Computer Graphics. The fundamental theories behind 3D Computer Graphics have not changed that much over the years. In fact, many of the concepts in OpenGL can be traced back to ideas presented by Sutherland 30 years ago. Educators can agree on a general list of important topics such as the following: object modeling, scene hierarchy, modeling transformations, projections, visibility, clipping, scan conversion, lighting, surface detail, photo-realistic rendering, animation, and user interfaces. The open issue is how best to use the new graphics technology to teach undergraduates these core concepts. The major decision faced by any instructor of a graphics course is to choose the appropriate level of abstraction for their students to work at.

The needs of the course depend on the target audience. A vocationally oriented course might use existing graphics languages and applications off the shelf. Students would practice the concepts of computer graphics by exploring the functionality of these utilities. A benefit of this approach is that familiarity with packages which are used in industry would make students highly "marketable" upon graduation. However, students might not get a deep understanding of the founding theories with this approach. At the other extreme, a course targeting programmers who might build the next generation of graphics processors or API's might forgo the use of any existing utilities and build several graphics algorithms from scratch. The goal here is to give students an in-depth understanding of the founding principles. With this knowledge, it should be easy for them to learn to use commercial packages on their own. Perhaps the most appropriate goal is a compromise between the two. It would be at a low enough level to reinforce the principles of computer graphics but would rely on available technology to limit the amount of irrelevant work that the students must do to implement graphics algorithms.

The goal of the SLIDE system is to educate individuals who are interested in the theory and application of computer graphics. A course on this subject should have multiple approaches for conveying and practicing such material. Students learn the theory from lectures, text books, and on-line documentation. Written assignments reinforce the theory behind concepts and give a high level understanding of how different algorithms work. A drawback to written assignments is that the feedback loop is usually not very tight, typically on the order of days or weeks. In addition to written work, students use existing utilities to explore concepts interactively. Lessons such as this could be done on multiple levels. Students could use or adjust parameters in existing programs. Students might make scenes using a 3D modeling program. Or there might be Java applets in which students can interactively adjust parameters to see the effects of different algorithms. On a lower level, students might do projects where they use existing API's, such as VRML or OpenGL, to create graphics applications which exercise their knowledge. But even this approach only uses the theoretic algorithms as a black box. It is possible for a student to finish such an assignment without having a working knowledge of the algorithms they are implicitly invoking. Assignments in which students implement graphics algorithms force them to understand the concepts well enough to instruct the computer how to perform them. While programming, the students obtain instant feedback from the computer on whether their algorithms work. Successful completion of such an exercise will definitely leave the students with a working knowledge of the algorithms involved. However, programming assignments that are not well structured can waste students' time with tasks that are not instructive about the algorithms of computer graphics.
To maximize the amount of productive programming that the students are doing, it is important to provide a software framework that will steer students towards successful solutions to problems. At the same time it is important not to provide too much structure or else the students will just fill in the blanks rather than exercise their minds.

The SLIDE system is designed to teach computer graphics using many of these techniques. The on-line SLIDE documentation gives insight into graphics theory and algorithms, but it is only designed to augment lecture and text book information. The SLIDE language provides students with an interface to 3D rendering which is easy to learn and is powerful enough to describe sophisticated hierarchical dynamic scenes. It is similar in form and function to standard languages like VRML, Open Inventor, and RIB. The structure of the scene elements is compatible with rendering API's such as Open Inventor, OpenGL, and Java3D. Using the SLIDE language alone, it is very easy to create interactive demos that teach different concepts of computer graphics. Students can explore concepts such as boundary representations, hierarchical modeling, or geometric transformations.

In addition, there is the SLIDE rendering program, similar to a VRML browser, which is what makes the SLIDE language a useful tool. The SLIDE renderer is programmed in a hierarchical style using C++. The program architecture is similar to the Open Inventor and Java3D API's. The SLIDE language and renderer do not provide a framework for implementing low level graphics algorithms though. For this reason, the SLIDE rendering pipeline programming laboratories were created. There are eight laboratories that incrementally build up a software rendering pipeline which is similar in function to the Open Inventor, OpenGL, and Java3D API's. The SLIDE laboratories are even more similar to Mesa 3D, a software implementation of OpenGL, in that they allow the students to get "under the hood" of these API's and work with the source code that implements the algorithms. The SLIDE labs are not designed to be a compatible replacement for OpenGL like Mesa 3D though. In fact, the labs are programmed on top of the OpenGL API. The SLIDE labs are designed to let students implement graphics algorithms with a minimum of programming setup and grunt work. Students build the complete SLIDE renderer, a module at a time, inside skeleton code designed to lead them in the right direction. Students get hands-on experience learning how the full rendering pipeline works in reasonably sized chunks. The SLIDE model of laboratories gives a cohesive view of the rendering pipeline as opposed to a scheme which consists of doing small unrelated assignments. The SLIDE software pipeline teaches students the theory and application of graphics concepts as well as sound lessons in software engineering.

Section 2 of this paper provides background about existing graphics API's. Their influences on SLIDE as well as their shortcomings will be discussed. Sections 3-6 present an in-depth description of the SLIDE system including the scene language, the renderer program, and the programming laboratories. Section 7 contains a discussion of the experiences of students who have used SLIDE to learn about computer graphics. Section 8 concludes with a discussion about future directions for SLIDE.

SLIDE has been influenced by many existing systems and languages. The SLIDE scene description language borrows ideas from GLIDE [glide], RenderMan [renderman], OpenGL [opengl], Open Inventor [inventor], and VRML [vrml]. SLIDE uses Tcl/Tk [] for its scripting language as well as for creating graphical user interfaces (GUI). The SLIDE viewer's architecture has been highly influenced by the structure of OpenGL and Tcl/Tk. The SLIDE instructional laboratories were inspired by the Nachos [nachos] Operating System. To understand the design decisions made in SLIDE it is important to understand where its different components originated from.

SLIDE is the most recent in a line of scene description languages developed at U.C. Berkeley for the purpose of teaching the introductory undergraduate computer graphics course, CS184: "Foundations of Computer Graphics." The idea of these languages has been to provide the class with an outline for a simple scene description language so that the students could implement a rendering system for it in a single semester. The evolution of these languages has been as follows:

The only one of these languages I am familiar with and will discuss is GLIDE, as the others had been retired before my time. The GLIDE language added ideas about dynamics from the BUMP [bump] system, an animation utility built on top of Berkeley UniGrafix [unigrafix], into the scene language SDL. GLIDE was the language used in CS184 in the Spring of 1994 when I was a student in the class.

The GLIDE scene and object modeling hierarchy was very simple and reasonably powerful. The language was a human readable ASCII format that made it possible to model objects using a text editor. GLIDE described the shape of objects in the form of a boundary representation. To make it easier for students to implement a viewer for it, the only primitives in GLIDE were polygons and polyhedrons. The modeling methodology was bottom up. A face was defined by a list of points which made up its contour. Simple objects were created with a set of faces. More complicated objects could be created using the group and instance mechanisms to build up a scene graph. A group node consisted of a list of instances of other groups or objects. Instances could contain geometric transformations to translate, rotate, or scale their children. Using this simple language and a text editor, students could quickly model small interesting scenes. SLIDE has retained GLIDE's language principles and geometry modeling hierarchy with little modification.
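The bottom-up hierarchy described above can be sketched as a handful of C++ types. This is only an illustrative sketch: the names `Point`, `Face`, `Object`, `Instance`, `Group`, `makeUnitSquare`, and `makeRowOfSquares` are hypothetical and are not taken from the GLIDE or SLIDE sources, and the transformation is reduced to a translation to keep the example short.

```cpp
#include <cassert>
#include <cstddef>
#include <memory>
#include <vector>

// All type and function names here are hypothetical, chosen for illustration.
struct Point { double x, y, z; };

// A face is a contour: an ordered list of indices into the owning object's points.
struct Face { std::vector<std::size_t> contour; };

// Translation-only stand-in for a general geometric transformation.
struct Transform { double tx, ty, tz; };

// Common base for anything an instance may refer to (an object or a group).
struct Node {
    virtual ~Node() = default;
    // Faces reachable from this node, counting every instance separately.
    virtual std::size_t faceCount() const = 0;
};

// A simple object: a point list plus the faces built from it.
struct Object : Node {
    std::vector<Point> points;
    std::vector<Face> faces;
    std::size_t faceCount() const override { return faces.size(); }
};

// An instance places a shared child with a transformation.
struct Instance {
    std::shared_ptr<const Node> child;
    Transform xform;
};

// A group is a list of instances of other groups or objects.
struct Group : Node {
    std::vector<Instance> instances;
    std::size_t faceCount() const override {
        std::size_t total = 0;
        for (const Instance& inst : instances) {
            assert(inst.child != nullptr);
            total += inst.child->faceCount();
        }
        return total;
    }
};

// Bottom-up modeling: points, then a face, then an object.
inline std::shared_ptr<Object> makeUnitSquare() {
    auto square = std::make_shared<Object>();
    square->points.push_back(Point{0.0, 0.0, 0.0});
    square->points.push_back(Point{1.0, 0.0, 0.0});
    square->points.push_back(Point{1.0, 1.0, 0.0});
    square->points.push_back(Point{0.0, 1.0, 0.0});
    square->faces.push_back(Face{{0, 1, 2, 3}});
    return square;
}

// Reuses one square 'count' times, each instance shifted along x.
inline std::shared_ptr<Group> makeRowOfSquares(std::size_t count) {
    auto row = std::make_shared<Group>();
    const std::shared_ptr<const Node> square = makeUnitSquare();
    for (std::size_t i = 0; i < count; ++i) {
        const double shift = 2.0 * static_cast<double>(i);
        row->instances.push_back(Instance{square, Transform{shift, 0.0, 0.0}});
    }
    return row;
}
```

Because instances share their child rather than copy it, a group returned by `makeRowOfSquares(3)` stores the square's geometry once and references it three times; instancing that row twice inside another group yields six rendered faces from the same four points.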

GLIDE also had many failings which made it a cumbersome tool for building interesting dynamic scenes or for learning computer graphics. The most disappointing feature of GLIDE was that the rendering program had to be specially recompiled for each dynamic scene. In effect, this meant that GLIDE was not a general purpose rendering system; instead, it was really a 3D application building API. The recompiling aspect created slow user feedback when modeling and modifying dynamic scenes.

The GLIDE light and camera objects were not very convenient for creating walk-through applications. Lights and cameras were not treated like other scene primitives. All of the geometry to place cameras or lights was built directly into these constructs, and they could not be hierarchically modeled into the scene. Instead they were treated only at the global level of the scene to make it more convenient for defining which camera and lights would be used for each rendering. This made it difficult to create scenes that included cars with headlights and drivers. Ideally one would model the car with two headlights attached to it and a driver nested inside the car with a virtual camera located at his head. Then as the car was transformed through the scene, the headlights and camera would move with it. To accomplish this in GLIDE, unfortunately, it was necessary to create functions to correctly flatten the car's hierarchical transformations and to create the necessary parameters to place the lights and camera in the world root's coordinate system.
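The "flattening" step mentioned above amounts to multiplying the transformations along the path from the world root down to the light or camera. The following is a minimal sketch under stated assumptions: it uses 4x4 matrices with the column-vector convention, and the helper names (`translation`, `rotationZ`, `flattenPath`, `transformPoint`) are hypothetical, not functions from GLIDE or SLIDE.

```cpp
#include <array>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Row-major 4x4 matrix acting on column vectors (an assumption of this sketch).
using Mat4 = std::array<std::array<double, 4>, 4>;
struct Vec3 { double x, y, z; };

inline Mat4 identity() {
    Mat4 m{};  // zero-initialized
    for (std::size_t i = 0; i < 4; ++i) m[i][i] = 1.0;
    return m;
}

inline Mat4 translation(double tx, double ty, double tz) {
    Mat4 m = identity();
    m[0][3] = tx; m[1][3] = ty; m[2][3] = tz;
    return m;
}

inline Mat4 rotationZ(double radians) {
    Mat4 m = identity();
    const double c = std::cos(radians);
    const double s = std::sin(radians);
    m[0][0] = c; m[0][1] = -s;
    m[1][0] = s; m[1][1] = c;
    return m;
}

inline Mat4 multiply(const Mat4& a, const Mat4& b) {
    Mat4 r{};
    for (std::size_t i = 0; i < 4; ++i)
        for (std::size_t j = 0; j < 4; ++j)
            for (std::size_t k = 0; k < 4; ++k)
                r[i][j] += a[i][k] * b[k][j];
    return r;
}

// Collapses a root-to-leaf list of instance transformations into one
// local-to-world matrix: world = M_root * ... * M_leaf.
inline Mat4 flattenPath(const std::vector<Mat4>& rootToLeaf) {
    Mat4 m = identity();
    for (const Mat4& step : rootToLeaf) m = multiply(m, step);
    return m;
}

// Applies a matrix to a point, including the homogeneous divide.
inline Vec3 transformPoint(const Mat4& m, const Vec3& p) {
    const double x = m[0][0] * p.x + m[0][1] * p.y + m[0][2] * p.z + m[0][3];
    const double y = m[1][0] * p.x + m[1][1] * p.y + m[1][2] * p.z + m[1][3];
    const double z = m[2][0] * p.x + m[2][1] * p.y + m[2][2] * p.z + m[2][3];
    const double w = m[3][0] * p.x + m[3][1] * p.y + m[3][2] * p.z + m[3][3];
    assert(w != 0.0);
    return Vec3{x / w, y / w, z / w};
}
```

For example, with a car translated by 10 along x, turned a quarter turn about z, and a headlight mounted one unit ahead of the car's origin, flattening the path `{translation(10, 0, 0), rotationZ(pi/2), translation(1, 0, 0)}` places the headlight at approximately (10, 1, 0) in world coordinates. A scene language that lets lights and cameras live inside the hierarchy performs this composition for the user.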

The GLIDE lights and cameras had another unfortunate drawback. By including location information inside these constructs, the GLIDE model failed to separate the concepts of modeling transformations from the computations which must be performed within a single coordinate frame. In the case of cameras, the GLIDE model failed to separate the idea of the view orientation matrix from the idea of camera projection. The GLIDE camera model closely followed the PHIGS model for cameras which was a mistake because of the confusion it causes for students. In the case of lights, the GLIDE model failed to separate the concept of placing a light in the world from computing the lighting equation for a point within a common coordinate system.

Another drawback to the GLIDE instructional system was how the programming laboratories were structured. The GLIDE rendering pipeline programming assignments were not very student friendly. At the beginning of the semester, students were given code to parse GLIDE description files into a C struct based data structure. The base code would also reevaluate the dynamic expressions on each frame, though it would not do anything to keep the data structure consistent. This was appropriate because CS184 is a graphics class not a compilers class, but it still put a large burden on the students to build a rendering system from scratch. Building a rendering pipeline in a single semester is a daunting task even if you know a lot about 3D rendering. For students in the class it was even harder because they were learning about rendering for the first time. This situation made it difficult for students to structure their programs such that they were modular enough to handle new functionality needed for later labs. At some point in the semester, most groups would find that they were at the limit of the scope of their code, at which point there was no choice but to scrap some or all of the work they had done and to start over. An error such as this can be a valuable learning experience in software engineering, but it clearly distracts from learning the concepts of the course for which the project was intended. The SLIDE educational system improves on GLIDE's laboratory framework in the hope of making the students' experiences more positive.

The SLIDE system addresses these shortcomings of GLIDE in order to produce a better educational graphics language and application framework. SLIDE addresses the difficulties of students in the programming laboratories by providing a set of well structured laboratories containing C++ skeleton code. This format helps to guide students to learning good solutions to the problems of rendering. This style of assignments takes away some of the creative freedom of the students, but it prevents students from reinforcing incorrect and inefficient practices. This idea of providing a structured coding framework in which the students create different modules of a cohesive system is modeled after the Nachos instructional laboratories used in teaching operating systems courses.

SLIDE's framework for laboratory assignments was inspired by Nachos [nachos], "Not Another Completely Heuristic Operating System." Nachos is a successful educational system for teaching an introductory computer operating systems class. The Nachos model for programming laboratories is to provide students with a description of an assignment along with some well structured skeleton code which they must understand, modify, and complete. There are many benefits to this approach, as opposed to giving pure specifications and having the students work from scratch.

The structure of the Nachos assignments leaves little room for confusion by the students. The assignments are documented with a written set of tasks and goals. And if students find these descriptions confusing or ambiguous, there is a way for the students to disambiguate the details on their own. Nachos provides compiled executable versions of the solutions to the assignments from the start. Before starting their own coding of an assignment, students can run tests through the solution executable to verify that they understand the concepts. Running test cases through a working example should also aid students in designing the architecture of their own code. While modifying their own code, students can debug and verify their work by running examples through the solution executable and through their own, comparing the results. This makes it easier for students to verify that they have completed the work in a satisfactory manner before submitting it.

The Nachos laboratories start the students off with a skeleton project which compiles and runs but does not necessarily do very much. This is helpful because many students are not familiar enough with the user environment to successfully create a project. Many students will have had little or no experience creating and managing makefiles. This framework gets students up and working faster on topics relevant to the course, and in addition it frees the instructors from answering numerous account- and system-related questions early in the course.

The structure of a program is established during its infancy and has a large effect on its manageability down the road. In Computer Science courses, students learn the theory behind a system, and at the same time are expected to implement part or all of such a system in laboratories. Given that the students are naive as to the ultimate form of such a system at the beginning of a semester, it is unrealistic to expect them to create from scratch a solid framework that will last them through the entire semester. Inevitably at some point in the middle of the semester students will find that their solutions are not modular enough to handle the growing complexity of the system.

If the course is structured in such a way that students are doing many small unrelated assignments, then this is not as important an attribute for the laboratories. The experience of working on an integrated system over the course of a semester is more rewarding for students and prepares them better for the "real world" of programming. Once students become familiar with a framework, it takes them less start up time to begin new assignments. Then they spend more time learning the material which the labs are supposed to be reinforcing and less time scrambling to get anything at all running. There is also a valuable lesson in software engineering to be gained by steadily building up a complex system. In the end, students who complete the full system are rewarded with a greater sense of accomplishment than they would have experienced on any single assignment.

OpenGL has had three main influences on SLIDE. First, the full SLIDE rendering program is built on top of OpenGL's C language API. This program was designed using OpenGL because it is the industry standard for building fast 3D rendering applications. It is supported on most OS's which makes SLIDE portable. Also most hardware 3D accelerator cards support the OpenGL API which allows SLIDE to run in real time on more complicated scenes.

OpenGL also strongly influenced the structure of the software rendering pipeline that the students implement. This pipeline can be thought of as a subset of OpenGL with a different programming interface. The OpenGL API consists of a global graphics state which can be configured during a rendering pass. Then geometric primitives are sent to it and rendered using the current attribute states. The SLIDE software implementation differs from OpenGL in that it is a graph based rendering interface. SLIDE uses the C call stack to maintain state instead of a global state machine. This is easier to implement for the students because the stack data structures are implicit in the C programming language. Aside from this difference, SLIDE performs almost exactly the same operations as OpenGL.
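The idea of letting the call stack carry the rendering state can be illustrated with a small recursive traversal. This is a sketch under assumptions, not the SLIDE implementation: `RenderState`, `SceneNode`, `render`, and `renderDemoScene` are hypothetical names, the only attribute tracked is a color string, and "drawing" is modeled by appending to a list. It assumes C++17, which permits `std::vector` of a type that is still incomplete at the point of declaration.

```cpp
#include <string>
#include <vector>

// Hypothetical attribute state; a real pipeline would also carry
// transformations, material properties, and so on.
struct RenderState { std::string color; };

// Hypothetical scene graph node for illustration.
struct SceneNode {
    std::string name;
    std::string colorOverride;          // empty means "inherit from parent"
    std::vector<SceneNode> children;
};

// The state is passed BY VALUE, so each recursive call works on its own copy.
// Returning from the call discards the child's changes, which plays the role
// of an explicit push/pop on a global state machine.
inline void render(const SceneNode& node, RenderState state,
                   std::vector<std::string>& drawn) {
    if (!node.colorOverride.empty()) state.color = node.colorOverride;
    drawn.push_back(node.name + ":" + state.color);
    for (const SceneNode& child : node.children) render(child, state, drawn);
}

// Builds a tiny scene and returns the order and color in which nodes are drawn.
inline std::vector<std::string> renderDemoScene() {
    SceneNode wheel{"wheel", "", {}};
    SceneNode car{"car", "blue", {wheel}};
    SceneNode tree{"tree", "", {}};
    SceneNode root{"root", "red", {car, tree}};
    std::vector<std::string> drawn;
    render(root, RenderState{"white"}, drawn);
    return drawn;
}
```

In this sketch `renderDemoScene()` yields `root:red`, `car:blue`, `wheel:blue`, `tree:red`. The tree is drawn red even though it follows the blue car, because the car's override lived only in the stack frame of its own `render` call.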

Lastly, OpenGL has had an influence on the SLIDE language. Significant examples are the SLIDE light and camera paths in the SLIDE render statement. The idea for these paths centers around the fact that OpenGL needs the camera and light positions defined before any geometry can be rendered. The OpenGL name stack which is used for picking operations was the model for the SLIDE paths. In addition, after the basic SLIDE system was completed, OpenGL was used as a guide for adding on extra features. OpenGL fog and stencils are two examples of OpenGL inspired extensions.

OpenGL could be an effective interface for teaching some aspects of 3D rendering. OpenGL would be useful for labs where the students implement hierarchical models and scene graphs. On the other hand, it would be hard to use it directly to teach labs on the rendering algorithms that make up its rendering pipeline. The students would only be able to get a user's perspective of the API which would not reinforce the details of those algorithms. It would be very hard to substitute a single module of the OpenGL pipeline with a student's code without having a complete software implementation of OpenGL available. However, the full implementation of OpenGL is more complicated than is desirable for teaching students in a one semester course.

Mesa 3D [mesa] is an all-software implementation of the OpenGL API and state machine. Similar to SLIDE, Mesa 3D is an open source implementation of an OpenGL software pipeline. This similarity implies that Mesa 3D could be used for instructional purposes, but this is not its intended use. Mesa 3D was created to be a software OpenGL alternative for non-SGI machines. Mesa 3D is instructive only by reading through its source code and figuring out how things were implemented.

SLIDE is not designed to be an implementation of the OpenGL API. SLIDE's interface is through text files which describe scene hierarchies instead of a low-level graphics programming API. SLIDE implements only a small subset of the OpenGL API. The reason for this limitation is to keep SLIDE small and simple enough for students to implement a renderer for it in a single semester. SLIDE is designed with teaching in mind, and the source code has been created so that students can implement modules within it easily. In fact, the SLIDE laboratories are actually built on top of OpenGL. Through the course of the semester the students implement more modules to make their software pipeline less dependent on OpenGL utilities.

Tcl and Tk combine to form a powerful utility for creating applications with Graphical User Interfaces (GUI). The Tool Command Language, Tcl, is a string based scripting language. In Tcl, any type of data can be represented as a string. Tcl is useful for many reasons. Tcl itself is a complete programming language. The SLIDE viewer embeds a Tcl interpreter which allows SLIDE to use Tcl as the scripting language for dynamic scenes. The Tcl language is a standard interface to Tk. Tk is a widget tool kit for creating GUI's. Tk has been implemented on many operating systems, which makes it a system-independent windowing API. Tk is very powerful, and it is much easier to use than a C or C++ windowing API. Tcl's interface to Tk was the largest motivating factor for using Tcl for SLIDE.

Tcl is also useful for gluing different components of an application together. Tcl can be used to glue C code to GUI's or other C code. Tcl has a convenient interface to C. A program can make Tcl calls from C, or it can make C calls from Tcl. An application can export some of its C functions to Tcl through a binding mechanism. Tcl bindings to C functions in applications may also be created by dynamically loading C shared library binaries that create function and variable exports in their initialization. With this functionality it is possible to create programs by gluing together many individual components. The SLIDE system has just begun to explore the possibilities that the Tcl framework provides.

RenderMan [renderman] is a standard scene description language for photo-realistic renderers. RenderMan has no way of describing dynamics because its intended use is for batch style rendering whereas SLIDE is intended for real-time interactive rendering. SLIDE uses simplified rendering assumptions in its algorithms that limit the realism that can be accomplished with it. As a mechanism to explore photo-realistic rendering, the SLIDE renderer has a RenderMan output module which will translate the current state of a SLIDE scene into the RenderMan format. This can then be used to make high quality stills of SLIDE objects or to output a sequence of frames to make a high quality animation. The SLIDE language and renderer can be used in this way as an animation modeler and fast previewer for photo-realistic images and movies. It gives the students a chance to experiment with ray tracing and radiosity without implementing these algorithms and without having to learn a new scene description language.

The interaction with RenderMan has had an effect on the SLIDE language. Curved surface primitives have been added as extensions on top of the basic SLIDE language. Not having to tessellate these primitives to make them valid input for SLIDE makes it easier to create much more visually pleasing images with RenderMan. Another extension to the SLIDE language was a way of describing an area light source. The OpenGL SLIDE renderer has no way of implementing such a primitive in real time, but adding it to the language makes it much easier to use SLIDE as a modeling and previewing system for RenderMan.

There are many aspects of RenderMan that make it an undesirable language for teaching computer graphics. The full RenderMan interface is too complicated for students to implement in a single semester. It is not an efficient way of describing animations. The only way to create an animation in RenderMan is to describe each frame of the animation separately. Some geometry can be shared between frames, but any part of the scene that contains anything dynamic must be repeated in every frame. Another problem with RenderMan is that the feedback to the user is slow. Even when using the OpenGL based previewing renderer instead of the photo-realistic renderer, there is no mechanism for interactively adjusting parameters on your model which would be very useful for many things. The last point about RenderMan is a personal bias. The RenderMan coordinate systems are left-handed by default. This seems like a poor choice for education because the standard practice in all mathematics classes now is to use right-handed coordinate systems. It seems silly to add this extra confusion to a domain that already has enough conceptual difficulties for most students.

Open Inventor [inventor] is a C++ class hierarchy API for modeling scenes and rendering them using OpenGL. Open Inventor is very comparable to the C++ class hierarchy which is used in the SLIDE renderer and the SLIDE software pipeline laboratories. Open Inventor was originally designed as a programming API, but later a file format was created for it which was the de facto standard for exchanging scene data until VRML was created. Open Inventor has a wide variety of scene node types. It also has some nice mechanisms for dealing with user input and dynamics. For the most part, if there is some kind of functionality that you desire, Open Inventor probably has a ready made primitive that does pretty close to what you want. SLIDE takes a more utilitarian stance. SLIDE has a few simple and powerful mechanisms with which a user can do almost anything. This puts more burden on the scene creator, but it keeps the scope of the SLIDE renderer smaller which makes it a better candidate for implementation by students in a semester course.

Open Inventor's scene graph node hierarchy has a few peculiarities. Cameras' and lights' effects depend upon their topological location within the scene graph hierarchy. The rendering begins at a root node, but nothing is rendered until a camera is encountered in its depth first search. Then, unless otherwise specified, the rendering traversal continues and every node encountered after the camera is rendered. There is also a mechanism in which the camera can set a new root node to begin the geometry rendering from. This scheme is both confusing and inefficient to implement. It demands that the user be aware of how the ordering of children nodes within a group will affect the final rendering. It is inefficient because for every rendering pass, a depth first search must be run first to find where the viewpoint is located. Lights have similar idiosyncratic behavior. Once a light node is traversed in the depth first rendering pass, it is turned on for all nodes that follow it in this traversal. Again the position in the hierarchy has bizarre effects on the resulting image. SLIDE has a cleaner model for dealing with these issues.
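The order dependence described above can be reproduced with a toy traversal. This sketch does not use the Open Inventor API and ignores its state-isolating nodes; `Kind`, `ToyNode`, `traverse`, and `lightReport` are hypothetical names that model only the behavior stated in the text, namely that a light affects every node visited after it in the depth first pass. It assumes C++17 for the recursive `std::vector` member.

```cpp
#include <string>
#include <vector>

// Toy node kinds; these are not Open Inventor classes.
enum class Kind { Group, Light, Shape };

struct ToyNode {
    Kind kind;
    std::string name;
    std::vector<ToyNode> children;   // used only by groups
};

// Depth first traversal. 'lightsOn' is passed BY REFERENCE, so a light that
// has been visited stays on for every node visited afterwards, including
// later siblings. Each shape records how many lights were on when it was drawn.
inline void traverse(const ToyNode& node, int& lightsOn,
                     std::vector<std::string>& report) {
    switch (node.kind) {
    case Kind::Light:
        ++lightsOn;
        break;
    case Kind::Shape:
        report.push_back(node.name + ":" + std::to_string(lightsOn));
        break;
    case Kind::Group:
        for (const ToyNode& child : node.children)
            traverse(child, lightsOn, report);
        break;
    }
}

// Returns one "shape:lightCount" entry per shape, in traversal order.
inline std::vector<std::string> lightReport(const ToyNode& root) {
    int lightsOn = 0;
    std::vector<std::string> report;
    traverse(root, lightsOn, report);
    return report;
}
```

With a group whose children are a lamp followed by a box, the report is `box:1`; swapping the two children gives `box:0`. The same two nodes produce different images purely because of sibling order, which is the kind of surprise the text objects to.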

The Virtual Reality Modeling Language, VRML [vrml], is the standard for modeling 3D dynamic scenes on the Web. VRML is comparable to the SLIDE language. The VRML standard is based on Open Inventor's file format. VRML added constructs to handle HTTP linking over the internet. VRML browsers are comparable to the SLIDE renderer though they have more built in facilities for navigating a scene. VRML was designed for describing 3D dynamic worlds and navigating through them. VRML has many nice built in features to facilitate such activities. Much like Open Inventor, the set of necessary primitives for a VRML browser is much greater than the requirements for a SLIDE renderer. SLIDE does not provide as many off the shelf utilities, but with a little ingenuity scene creators can use SLIDE to make anything they could make with VRML. The scope of SLIDE makes building a renderer for it easier.

VRML attempts to solve some of Open Inventor's scene hierarchy idiosyncrasies, but it still falls a little bit short. VRML removed camera descriptions and replaced them with viewpoints. It leaves it up to the browser to define the camera frustum. There is then a mechanism for choosing which viewpoint to view the scene from. So it is impossible to express view frustum information, which is necessary for describing projections, using pure VRML. VRML lights are very similar to Open Inventor lights. The VRML change is that directional lights only illuminate sibling nodes.

Java 3D [java3d] is similar to Open Inventor or the SLIDE class hierarchy. Java 3D is a scene graph API implemented as Java classes. Java 3D had not yet been released when the SLIDE system was started in 1998. Future implementations of SLIDE may be tailored towards Java 3D instead of OpenGL.

The main goal of the SLIDE system is to provide an educational framework for the principles of 3D computer graphics. SLIDE is designed to teach about hierarchical and procedural geometric modeling, animation, real-time rendering, and photo-realistic rendering. The SLIDE language, rendering program, and a sequence of programming laboratories have been developed in order to teach a semester long upper division undergraduate course about these foundations of computer graphics.

The three major principles of the SLIDE system are learn by doing, KISS, and modularity. The idea behind SLIDE's model of teaching is that students will gain a deeper understanding of computer graphics concepts and algorithms by implementing them. With this hands-on approach, students develop a functional understanding of the subject matter, as opposed to just hearing information in lecture and then forgetting it the minute they walk out of class. To keep the scope of the material reasonably sized for a semester long course, it is important to follow the KISS principle: "keep it simple, stupid." This is one of the major factors that prompted the creation of SLIDE despite the fact that there were established standards available. The existing standards were complicated enough that students would get lost in their details and would lose sight of the important concepts.

It is important to keep the SLIDE system modular for many reasons. The SLIDE language needs to be modular and extensible so that it is easy to add new constructs to it. The ability to add new constructs to the language will make it a more powerful modeling tool. The only way that the SLIDE renderer will be able to accommodate such changes in the description language is if its architecture is modular. In fact, the scene graph node abstraction of the basic SLIDE language itself forces the renderer to be modular to begin with. The all-software implementation of the renderer which is used to run the laboratories also must be very modular. The idea behind the programming assignments is that the students will build a different component of the rendering pipeline each week. These different modules must build on top of each other with every additional assignment. To make this possible, it was necessary to build a very modular solution to the entire rendering pipeline, and then remove different functionality, one module at a time, to construct the assignments for the students.

Portability of the SLIDE source code has been a major consideration from the start. In Spring 1998, the SLIDE laboratory assignments were implemented by students on both SGI and HP workstations. This was relatively straightforward because both types of machines had some form of Unix as their OS. In Spring 1999, the students used the same SGI workstations and new Intel machines running Windows NT. It was a difficult task structuring the source code and the environment to support both Unix and NT, but now everything is set up such that the exact same source code can be compiled on either type of machine. The reason that the SLIDE C++ source code can be cross-compiled is that SLIDE only uses standard I/O interfaces which are supported on all platforms. SLIDE uses Tcl and Tk for its windowing API, and it uses OpenGL for its 3D rendering API. Almost all of the remaining code depends only on ANSI C and C++ features. There are only a few places where C preprocessor directives like #ifdef are necessary to maintain this portability.

Maintaining portability will be even more important in the future in order to make SLIDE a utility that can be easily downloaded over the internet and used by anyone. The goal is to make it possible for other universities to use SLIDE to teach their undergraduate computer graphics courses in a similar hands-on manner. In addition, individual users on the web may find SLIDE useful for learning about computer graphics or as a tool for modeling and creating animations or interactive programs.

The reason Java was not chosen to program the SLIDE system is that it was not fast enough to be used to build a real-time rendering system at the time. Java is less efficient than compiled C++ by itself, but this was not the main concern. Even more important was the fact that there was no 3D rendering hardware support available through Java. OpenGL acceleration greatly improves the performance of the SLIDE renderer over the software-only version used in the laboratory assignments. The Java 3D API may be the solution to this problem in the future, but at the time it did not exist. It will be interesting to see how well a Java 3D based renderer will perform in comparison to the existing OpenGL based renderer.

As stated above, the major goal for the SLIDE language was to keep it simple enough to be implemented by undergraduates in a single semester. At the same time, it is desirable for it to be powerful enough to describe sophisticated hierarchical dynamic scenes, so that students can apply what they have learned during the semester to build interesting interactive final projects. The scheme used to fulfill these two opposing goals has been to limit the scope of the language to a few basic primitives and mechanisms which can be combined to create more interesting tools. This means that the SLIDE language does not have as many ready-made features as VRML or Open Inventor. SLIDE is designed to be more of a general programming utility which can be extremely powerful in the hands of programming-oriented users.

Both education and performance are goals for the SLIDE renderer. Two different SLIDE rendering programs have been implemented to fulfill these goals. The all-software implementation was designed as an instructional tool for teaching the internal operation of the rendering pipeline. This program is the basis for the SLIDE laboratory assignments. The other SLIDE renderer uses the hardware acceleration of the OpenGL API in order to achieve real-time rendering performance. This renderer makes the SLIDE language a useful modeling tool.

Many design constraints apply to both of these two rendering programs. They must be able to run any dynamic SLIDE scene without having to be recompiled. They should be as efficient as possible. This implies that they should have mechanisms for culling away unimportant geometry as early as possible in the rendering pass to limit the amount of work done. They should have features to help users view and debug SLIDE geometry descriptions.

The software renderer must have a very modular and clean architecture. This program is the basis of the SLIDE laboratory assignments. It must be possible to remove functional units of the rendering pipeline without destroying the functionality of the program and to create a sequence of assignments which incrementally build up the full rendering pipeline. This program must be clean and well documented because students will need to read and understand its source code.

The OpenGL accelerated renderer has different requirements. It must be extensible, so that it is easy to incorporate additional primitives into it as needed. It should be fast enough to be able to render interesting dynamic scenes in real time. It should be a useful tool for modeling geometry and animations. It should have features to aid in the creation of photo-realistic images. Though it is not designed to be a photo-realistic renderer, it is useful as a previewing tool for such scenes. It must have an output module to produce RenderMan RIB files which can then be run through a high-quality batch-style rendering program.

The purpose of the SLIDE programming laboratories is for students to get experience implementing the algorithms that make up a real-time rendering pipeline. This hands-on experience should reinforce the concepts behind the design of a 3D rendering pipeline. The goal over the course of a semester is to have students implement a complete rendering pipeline. This rendering pipeline must be somewhat simplified in comparison to a full rendering pipeline like OpenGL. It would not be reasonable to expect students to implement the full OpenGL API in a single semester.

The architecture of the laboratory assignments should break the task of implementing the rendering pipeline into smaller, more manageable chunks. These assignments should incrementally build on top of each other to eventually create a complete software 3D renderer. The code that is given to students as a starting point should be well structured to lead them in the right direction. The code must be well documented and commented. The students need to understand how the program is supposed to work and which portions of it they need to implement for the assignment.

The SLIDE assignment architecture is structured such that later assignments build on top of the functionality of earlier assignments. It is therefore important to provide a safety net for students who fail to complete an assignment and begin to fall behind in the course. A fair way of providing this is to give students new source code at the beginning of each assignment which contains the solution to the previous assignment and possibly some skeleton code as a guide for the next assignment. This scheme is equitable, and it prevents students from getting left so far behind that they have no time to keep up with the current topics of the class. The other benefit of this scheme is that students have the opportunity to work with many different partners throughout the semester. Every new assignment is a clean slate, so there is no code continuity that they are losing by working with new people.

The SLIDE language was designed to be simple, clean, and powerful. SLIDE is intended to be used as an educational tool for teaching undergraduates about computer graphics. The SLIDE language is a simple human readable text format for describing dynamic scene hierarchies. The major lessons to be learned from the SLIDE language are geometric modeling, hierarchical modeling, and animation. Most of the SLIDE primitives have a direct mapping to a part of a 3D rendering pipeline. The core of the SLIDE language limits itself to a few primitives that can still represent interesting scenes. This makes it easier for students to learn how to use it, and this also makes it possible for students to implement a rendering system for the language in a single semester. SLIDE also strives to be expressive enough to serve as an API for students to create interesting dynamic and interactive scenes for their final projects. SLIDE can represent almost any scene that another scene description language can, but it may take more effort from the user to do so.

To ease the job of the user, SLIDE has added a few extra primitives. For instance, SLIDE's original set of 3D transformations was the same as GLIDE's: rotations about an arbitrary axis, translations, and nonuniform scalings. These three transformations can be combined to perform shears and other transformations, so those extra transformation types were not explicitly part of the language. In practice, it became clear that for placing a camera or a light in a scene it would be nice to have a rigid body transform where the user just describes an eye point and a target. So the lookat transformation was then added to SLIDE.

SLIDE started out as a simple reimplementation of GLIDE. Both languages use a boundary representation (BREP) for describing geometry and geometrical instancing for creating hierarchical scenes. In SLIDE, most entities must be assigned identifiers. Other entities like instances and render statements have optional identifiers. These identifiers are used to reference entities from within other entities in order to link the scene graph together. Entity identifiers follow the C style convention.

SLIDE can dynamically change the geometry that is rendered, but the topological structure of a SLIDE scene graph is frozen after initialization. This means that all the geometry that will ever be necessary for a scene must be created as the file is being read in and initialized. This does not preclude applications that need to dynamically add or remove geometry. The user must allocate in advance all structures that could ever be added. All of the floating point value fields and flag fields of any SLIDE entity can be replaced with arbitrary expressions that will be reevaluated on each frame. This polling organization of the dynamics makes SLIDE a simpler language to implement. It also permits SLIDE renderers to classify portions of the scene graph as perpetually static. Renderers can then optimize these static portions for faster rendering.
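
The polling model described above can be illustrated with a minimal Python sketch. It assumes that a field is either a constant or a callable of the current time; in SLIDE itself the expressions are written in Tcl, and the names `evaluate`, `is_static`, and `frame_values` are hypothetical.

```python
def evaluate(field, time):
    """Return the field's value for this frame; callables are polled again."""
    return field(time) if callable(field) else field

def is_static(node):
    """A node whose fields are all constants never changes between frames."""
    return not any(callable(f) for f in node.values())

def frame_values(node, time):
    """Evaluate every field of a node for the given frame time."""
    return {name: evaluate(f, time) for name, f in node.items()}

# Hypothetical nodes: one spins with time, one never changes.
spinner = {"angle": lambda t: 90.0 * t, "scale": 2.0}
pedestal = {"angle": 0.0, "scale": 1.0}
```

A renderer could use something like `is_static` once after initialization to decide which subgraphs are safe to cache.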

SLIDE differs from GLIDE in a number of ways. The major differences are: the camera and light descriptions, increased uniformity in the instancing mechanism for all types of nodes, the creation of the render statement, the level of detail (LOD) flags, and the use of Tcl as the dynamics language. All of these changes have made SLIDE a cleaner and more powerful tool for describing dynamic scenes.

To simplify what students are expected to build, the only core geometric primitives are planar faces and polyhedrons built from these faces. In general, a face is expected to be convex. Concave, self-intersecting, and nonplanar polygons can be represented, but they will be rendered with undefined results. In the scan conversion laboratory, though, students are expected to implement a polygon scan conversion algorithm that will work on any planar face.

The SLIDE modeling paradigm is bottom up. First point entities are created to define positions in space. Then faces are created with a list of references to the points. This list of points defines the face contour in counterclockwise order. Faces can be one-sided or two-sided based on the object that is referencing them. The counterclockwise ordering defines which side of the face is the outward facing side using the right-hand rule.
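
As an illustration of how a counterclockwise contour determines the outward side, here is a small Python sketch that computes a face normal with Newell's method. The function name `face_normal` is hypothetical, and Newell's method is one common choice rather than necessarily what the SLIDE renderer uses.

```python
def face_normal(points):
    """Unit normal of a planar polygon whose contour is listed counterclockwise.

    Uses Newell's method, which sums contributions from every edge and so
    does not depend on which three vertices happen to be picked.
    """
    nx = ny = nz = 0.0
    count = len(points)
    for i in range(count):
        x0, y0, z0 = points[i]
        x1, y1, z1 = points[(i + 1) % count]
        nx += (y0 - y1) * (z0 + z1)
        ny += (z0 - z1) * (x0 + x1)
        nz += (x0 - x1) * (y0 + y1)
    length = (nx * nx + ny * ny + nz * nz) ** 0.5
    return (nx / length, ny / length, nz / length)

# A unit square in the XY plane, counterclockwise when seen from +Z.
square = [(0, 0, 0), (1, 0, 0), (1, 1, 0), (0, 1, 0)]
```

Reversing the contour order flips the normal, which is the right-hand rule stated above.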

Objects are then created with a set of face references. Objects can be closed polyhedrons or arbitrary groups of faces. An object has a solid flag that defines whether its faces are one-sided or two-sided. Closed polyhedrons should be marked as solid. A closed polyhedron encloses a solid region of space. When viewed from its outside, only the external side of any face can be seen, so the renderer can quickly eliminate all backfaces. Solid objects are more efficient to render because on average half of their faces can be trivially rejected in this way. Hollow objects with infinitely thin walls are sometimes convenient for modeling, too. In this case, the faces must be two-sided.
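
The backface rejection for solid objects can be sketched as follows. This is a minimal Python illustration under the assumption of a perspective view with a known eye position; the names `is_backface` and `faces_to_draw` are hypothetical.

```python
def is_backface(normal, point_on_face, eye):
    """True when the outward normal points away from (or edge-on to) the eye."""
    to_eye = [e - p for e, p in zip(eye, point_on_face)]
    return sum(n * t for n, t in zip(normal, to_eye)) <= 0.0

def faces_to_draw(faces, eye, solid):
    """faces is a list of (outward_normal, point_on_face) pairs."""
    if not solid:
        return list(faces)  # two-sided faces are never culled
    return [f for f in faces if not is_backface(f[0], f[1], eye)]

# Front and back faces of a cube centered at the origin.
front = ((0, 0, 1), (0, 0, 1))
back = ((0, 0, -1), (0, 0, -1))
```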

The most important aspect of SLIDE is its scene graph hierarchy. The SLIDE entities involved here are the group and the instance. These two entities make it reasonable to create and manage complicated scenes. The scene graph is a directed acyclic graph (DAG) where the nodes of the graph are objects, lights, cameras, or groups. The arcs of this DAG are the instances which can contain arbitrary geometry transformations. A simple example is a gear wheel with N teeth. First an object can be created in the shape of one of the teeth of the gear wheel. Then a group node can be created that contains N instances of the tooth rotated by different angles. This gear wheel group can then be instanced by another group to create an interlocking gear assembly.
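
The gear wheel example can be sketched in Python as below. This is not SLIDE syntax; the classes `Instance` and `Group` and the function `make_gear` are hypothetical stand-ins that only show how N instances share one tooth object.

```python
from dataclasses import dataclass, field

@dataclass
class Instance:
    node: object                 # shared reference to a node, not a copy
    rotate_z_deg: float = 0.0    # the instance's transformation

@dataclass
class Group:
    name: str
    instances: list = field(default_factory=list)

def make_gear(tooth, teeth):
    """Build a group holding `teeth` instances of one tooth, evenly rotated."""
    gear = Group("gear")
    for i in range(teeth):
        gear.instances.append(Instance(tooth, 360.0 * i / teeth))
    return gear

tooth = object()                 # stands in for the tooth geometry
gear = make_gear(tooth, 8)
# The gear group itself can be instanced again to build an assembly.
assembly = Group("assembly", [Instance(gear, 0.0), Instance(gear, 22.5)])
```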

A group is a set of instances of other nodes. A node can be an object, a camera, a light, or another group. This differs from GLIDE where only the geometric entities, objects and groups, could be instanced in the scene hierarchy. The SLIDE language describes a scene as a DAG, so it is illegal to specify instancing cycles. A group cannot instance itself or any group that has it as a descendant. Such a scene topology would lead to infinite loops.
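
A parser could reject instancing cycles with a depth-first search. The following Python sketch assumes the scene is given as a mapping from each group name to the names of the nodes it instances; the function name `has_cycle` is hypothetical.

```python
def has_cycle(children):
    """Detect an instancing cycle in a mapping of group name to child names."""
    WHITE, GRAY, BLACK = 0, 1, 2   # unvisited, on the current path, finished
    color = {}

    def visit(node):
        color[node] = GRAY
        for child in children.get(node, ()):
            state = color.get(child, WHITE)
            if state == GRAY:          # child is an ancestor: a cycle
                return True
            if state == WHITE and visit(child):
                return True
        color[node] = BLACK
        return False

    return any(color.get(n, WHITE) == WHITE and visit(n) for n in list(children))

# Sharing a node many times is legal; only cycles are not.
legal = {"assembly": ["gear", "gear"], "gear": ["tooth", "tooth", "tooth"]}
illegal = {"a": ["b"], "b": ["a"]}
```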

An instance contains a reference to a node and an optional list of transformations. Instances also can have an optional identifier which is necessary for camera and light paths in the render statement. An instance statement does not make a copy of a node, but instead is a pointer to that node. This is why a SLIDE scene is a DAG instead of a simple tree. This model saves storage space and execution time. A subgraph that is instanced many times must be rendered an equal number of times, but it only has to be updated once.

The geometric transformations of an instance are listed in an order such that each additional transformation is applied from the point of view of the world coordinate system. This ordering is sometimes called a command post ordering. The idea is that the user can be thought of as a commander who is altering the configuration from the outside. This ordering is more natural for modeling a single object.

In contrast, the transformations of successive instances encountered as the scene graph is traversed from top to bottom in a rendering pass compose in the opposite order. Each subsequent transform is applied within the local coordinate system as it currently stands. This ordering is sometimes called the turtle walk because it is how Logo commands were ordered.
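
The two orderings can be made concrete with 4x4 matrices. The Python sketch below assumes column vectors, so a transformation applied in the fixed outer frame is pre-multiplied and a transformation applied in the current local frame is post-multiplied. All function names are hypothetical.

```python
import math

def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

def translate(tx, ty, tz):
    return [[1, 0, 0, tx], [0, 1, 0, ty], [0, 0, 1, tz], [0, 0, 0, 1]]

def rotate_z(degrees):
    c, s = math.cos(math.radians(degrees)), math.sin(math.radians(degrees))
    return [[c, -s, 0, 0], [s, c, 0, 0], [0, 0, 1, 0], [0, 0, 0, 1]]

def instance_matrix(transforms):
    """Within one instance: the first listed transform acts on the geometry
    first, and each later one acts in the fixed outer frame (pre-multiply)."""
    m = [[float(i == j) for j in range(4)] for i in range(4)]
    for t in transforms:
        m = matmul(t, m)
    return m

def apply(m, p):
    v = (p[0], p[1], p[2], 1.0)
    return tuple(sum(m[i][k] * v[k] for k in range(4)) for i in range(3))

# Translate along X, then rotate 90 degrees about the outer Z-axis.
parent = instance_matrix([translate(1, 0, 0), rotate_z(90)])
child = instance_matrix([translate(1, 0, 0)])
# Down the hierarchy, the child's matrix is applied in the parent's local frame.
composite = matmul(parent, child)
```

With these inputs the instance origin lands near (0, 1, 0), and the child's origin lands near (0, 2, 0) because its translation happens inside the already rotated parent frame.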

SLIDE's set of transformations comprises scaling, rotation, translation, and the convenient lookat transformation. They can be applied in any order, so any transformation that can be expressed as a concatenation of the basic ones can be applied in a single instance. The lookat transformation was added to make the placing of cameras and lights in a scene easier. In practice, it is useful for placing any kind of object. In the lookat, an eye point, a target point, and an up vector are specified. These parameters define a viewer coordinate system. The lookat transformation does a rigid body change of basis to move the base coordinate system in line with the viewer's coordinate system. This is the inverse of what is described in the PHIGS model [PHIGS]. The lookat is useful for placing cameras in the world which follow other objects. The lookat is also useful for lights and geometry. An example would be applying it to a gun so that it will track a target.

SLIDE has a hierarchical way of assigning surface, shading, and LOD attributes to nodes in the scene graph. Surface entities are used for describing the material properties of a face. A surface specifies color, lighting coefficients, reflectivity, and an optional texture map for surface detail. Any node, face, or point in the scene graph can refer to a surface entity. If a surface is not specified, then the node gets the special surface SLF_INHERIT which is a default gray. When rendering, a node decides which surface to use based on its local surface and the surface being passed down by its parent. If a node has SLF_INHERIT it will take on the surface properties of its parent, otherwise it will use the surface that is specified locally. There is no mechanism to override a locally specified surface property.
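
The inheritance rule for surfaces can be sketched as a top-down traversal. In this Python illustration the scene is a nested dictionary and surfaces are plain strings; both are simplifications, and `resolve` and `resolve_tree` are hypothetical names.

```python
SLF_INHERIT = "SLF_INHERIT"

def resolve(local, inherited):
    """A locally specified surface always wins; SLF_INHERIT defers to the parent."""
    return inherited if local == SLF_INHERIT else local

def resolve_tree(node, inherited, out=None):
    """Walk the hierarchy and record the surface each node ends up with."""
    out = {} if out is None else out
    current = resolve(node.get("surface", SLF_INHERIT), inherited)
    out[node["name"]] = current
    for child in node.get("children", ()):
        resolve_tree(child, current, out)
    return out

scene = {
    "name": "car", "surface": "red",
    "children": [
        {"name": "wheel", "children": [
            {"name": "hub", "surface": "blue"},
            {"name": "tire"},
        ]},
    ],
}
```

Starting the traversal with the default gray, `resolve_tree(scene, "gray")` gives the wheel and tire the car's red, while the hub keeps its own blue.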

SLIDE shading flags work very similarly to surfaces. Any node or face can have one of the following shading flags: SLF_INHERIT, SLF_WIRE, SLF_FLAT, and SLF_GOURAUD. Shading values have a similar inheritance protocol to surfaces. The rendering pass starts with the default value SLF_FLAT. At each node, the node passes on its parent's value if its local value is SLF_INHERIT, and the node passes on its own value if it is anything else. The shading flag is finally used at the face level of the scene graph. SLF_WIRE indicates that the face should be drawn as a wire frame outline. SLF_FLAT means that the face will be filled in with a solid color. With lighting off, this color is the color of the surface that results at the face based on the rendering traversal, as described above. With lighting on, the color is calculated by performing a lighting computation at the pseudo-centroid of the face (the unweighted average of all of the face's vertices) using the face normal. Finally, SLF_GOURAUD implies smooth or Gouraud shading. Each vertex of the face gets a different color value, and these color values are linearly interpolated across the face during scan conversion. With lighting off, the vertex color is specified in the surface property resulting at the point based on the rendering traversal, as described above. With lighting on, the vertex color is calculated by performing a lighting calculation at the vertex's position using the vertex's normal. SLIDE points can be specified in the file with a normal. This is useful if the user is trying to approximate a smooth surface whose normal information is known. If the normal is not specified, then the point gets a weighted average of the face normals of the faces adjacent to it, i.e. those that reference the point in their contour list.
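
Two of the quantities used above are easy to sketch in Python: the pseudo-centroid used for flat shading and the averaged vertex normal used for Gouraud shading. The text does not say which weights SLIDE uses for the normal average, so the equal weights below are an assumption, and both function names are hypothetical.

```python
def pseudo_centroid(vertices):
    """Unweighted average of a face's vertices."""
    count = len(vertices)
    return tuple(sum(v[i] for v in vertices) / count for i in range(3))

def vertex_normal(face_normals, weights=None):
    """Normalized weighted average of adjacent face normals.

    Equal weights are assumed when none are given; area or angle weights
    could be passed in instead.
    """
    if weights is None:
        weights = [1.0] * len(face_normals)
    total = [sum(w * n[i] for w, n in zip(weights, face_normals))
             for i in range(3)]
    length = sum(c * c for c in total) ** 0.5
    return tuple(c / length for c in total)

square = [(0, 0, 0), (2, 0, 0), (2, 2, 0), (0, 2, 0)]
```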

Level of detail (LOD) flags have been newly introduced with SLIDE. They control rendering complexity. The possible values for the LOD flag are: SLF_OFF, SLF_BOUND, SLF_EDGES, or SLF_FULL. They are most useful as on/off switches for different branches of the scene hierarchy. For rendering, SLF_OFF indicates that the current node and all of its descendants are to be omitted in the current frame. They are not removed from the topology of the scene graph, but they should not be considered as part of the scene geometry for any type of geometrical calculation. SLF_BOUND means that the current node and all of its descendants should be rendered as a simple outline of their top level bounding box. This is designed to speed up rendering because none of the nodes of the subtree need to be traversed in the actual rendering pass. SLF_EDGES forces all descendant nodes to be drawn in wire frame regardless of their shading flags. Wire frame is considered to be a lower quality of rendering, and in that sense it is a lower level of detail. SLF_FULL means to render everything at the best possible quality. During the rendering traversal, a node determines its effective LOD by taking the minimum detail of its parent's LOD and its local LOD.
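
The minimum-detail rule can be written in a few lines of Python. The ordering of the four flags from least to most detail is taken from the order in which they are described above, and `effective_lod` is a hypothetical name.

```python
# Least to most detail, following the order in which the flags are described.
LOD_ORDER = ["SLF_OFF", "SLF_BOUND", "SLF_EDGES", "SLF_FULL"]

def effective_lod(parent_lod, local_lod):
    """A node can lower the detail passed down by its parent but never raise it."""
    return min(parent_lod, local_lod, key=LOD_ORDER.index)
```

Because the parent's value caps everything below it, a single SLF_OFF high in the hierarchy suppresses the entire subtree.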

The LOD flags do not imply any type of algorithm for reducing the number of triangles used to represent the object. But this and other techniques can be represented using the LOD flags. N versions of an object can be modeled with varying geometric complexity. These objects can be instanced by a single group node in which all of the instances have dynamically controlled LOD flags. This group then acts as a switch node for choosing the correct object representation. Tcl code then needs to control the LOD flags of this switch node by assigning exactly one LOD flag as SLF_FULL and all the other N-1 as SLF_OFF. This on/off mechanism of the LOD flag is useful for many things. Another example is having an object appear during the middle of an animation. In SLIDE, no nodes can be added to the topology of the scene graph dynamically. If the scene describes the nodes on start up and suppresses them with LOD flags, the scene can later change the value of these LOD flags to make it appear as if the nodes are being dynamically added to the scene.
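
The switch node logic can be sketched as below. In SLIDE this control code would be written in Tcl; the Python here only illustrates the logic, and the distance-based selection policy with its thresholds is an assumption made for the example.

```python
def switch_flags(count, active):
    """LOD flags for a switch node: exactly one SLF_FULL, the rest SLF_OFF."""
    if not 0 <= active < count:
        raise ValueError("active index out of range")
    return ["SLF_FULL" if i == active else "SLF_OFF" for i in range(count)]

def pick_by_distance(distance, thresholds):
    """Hypothetical policy: index 0 is the most detailed version, and each
    threshold marks the distance at which the next coarser one takes over."""
    for index, limit in enumerate(thresholds):
        if distance < limit:
            return index
    return len(thresholds)
```

For three versions of an object and thresholds of 10 and 50 units, a viewer 15 units away would select the middle version.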

The SLIDE LOD mechanism of suppressing attributes of nodes lower in the scene graph hierarchy does not interact well with OpenGL's rendering model. For this reason, SLF_BOUND and SLF_EDGES are not completely supported in the hardware accelerated version of the SLIDE renderer.

SLIDE cameras define a projection in a canonical position. This is different from GLIDE where the camera also specified a coordinate system in a way similar to the SLIDE lookat transformation. SLIDE makes a clean separation between the ideas of view orientation and projection. A SLIDE camera is very similar to a real camera. A SLIDE camera has parameters that describe its view frustum, much as the shape and lenses of a real camera define its projection. In SLIDE a camera is just another type of node in the scene hierarchy, so it can be modeled into the scene in the same way that any piece of geometry is. This is similar to the real world situation where photographers position their cameras before snapping a photograph. The SLIDE camera node differs from other nodes in the scene hierarchy in that it does not contribute any geometry to the scene because it is only a virtual camera.

The SLIDE camera has a projection flag and a view frustum that is defined by six values. The projection flag can be either SLF_PARALLEL or SLF_PERSPECTIVE. A parallel projection is where the viewer is infinitely far away from an object so it appears that all rays of light coming from the object to the viewer are parallel. In a perspective projection, the viewer is a finite distance away from the object they are viewing. Perspective projection is closer to what humans experience in the real world.

The view frustum parameters define a frustum shape in the camera's canonical coordinate system. The parameters of the camera frustum are (Xmin, Ymin, Zmin) and (Xmax, Ymax, Zmax). In this canonical system, the camera's center of projection (COP) or eye is defined to be at the origin looking down the negative Z-axis. The viewing plane, which is similar to a film plane, is perpendicular to the Z-axis at Z=-1. The ranges [Xmin, Xmax] and [Ymin, Ymax] define a rectangular window on the projection plane. If this window is not centered around the Z-axis, then it defines an oblique projection. Both the Zmax and Zmin parameters must be less than zero. The Zmin and Zmax parameters define where the back and front Z clipping planes are, respectively. This may seem reversed at first, but it is correct because objects that are further away have smaller Z values, i.e. negative Z values of larger magnitude.

For a perspective projection the view frustum is constructed by shooting four rays from the eye point, the origin, through the corners of the projection plane window to define a pyramid. The front and back clipping planes then truncate this pyramid. The resulting shape is the view frustum. Only objects that lie within this volume of space in the camera's coordinate system will appear in the final rendered image.

The view frustum for a parallel projection has a similar definition. In this case, a ray is shot from the origin to the center of the projection plane window. The ray defines the direction of projection (DOP). The eye point is then defined to be an infinite distance along this DOP in the positive Z direction, so all rays coming from the eye travel an infinite distance and are thus parallel. A simpler way of constructing the geometry of the view frustum is to make a second copy of the projection plane window's rectangle and to place this copy in the Z=0 plane centered around the Z-axis. Then an infinite shaft of space can be formed by creating four planes defined by corresponding edges of the two rectangles. Then the front and back clipping planes truncate this shaft into a parallelepiped that is the viewing frustum. Note that the front and back clipping planes are measured from the origin like in the perspective case even though conceptually the eye point is located at infinity.
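
Both frustum definitions lead to a simple containment test for a point in the camera's canonical coordinate system. The Python sketch below follows the constructions described above: a perspective point is projected through the origin onto the Z=-1 plane, and a parallel point is slid along the DOP onto that plane. The function name `in_frustum` is hypothetical.

```python
def in_frustum(point, lo, hi, projection):
    """lo = (Xmin, Ymin, Zmin), hi = (Xmax, Ymax, Zmax), with Zmin <= Zmax < 0."""
    x, y, z = point
    (xmin, ymin, zmin), (xmax, ymax, zmax) = lo, hi
    if not (zmin <= z <= zmax):      # behind the back plane or before the front
        return False
    if projection == "SLF_PERSPECTIVE":
        # Project through the eye at the origin onto the plane Z = -1.
        px, py = x / -z, y / -z
    else:  # "SLF_PARALLEL"
        # DOP runs from the origin to the window center (cx, cy, -1).
        cx, cy = (xmin + xmax) / 2.0, (ymin + ymax) / 2.0
        t = z + 1.0                  # steps along the DOP to reach Z = -1
        px, py = x + t * cx, y + t * cy
    return xmin <= px <= xmax and ymin <= py <= ymax

symmetric = ((-1, -1, -10), (1, 1, -1))
oblique = ((0, -1, -10), (2, 1, -1))
```

In the oblique parallel case the shaft leans along the DOP, so a point at depth -5 must be shifted about five units in X to stay near the center of the volume.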

SLIDE lights are very similar to SLIDE cameras. As with cameras, the positioning information of lights is separated from their definition. This is the same difference between GLIDE and SLIDE that was described in the camera description. A light has the following parameters: type, color, deaddistance, falloff, and angularfalloff. The type parameter specifies what type of light the light entity is being used as. The possible values are SLF_AMBIENT, SLF_DIRECTIONAL, SLF_POINT, and SLF_SPOT. All geometric light parameters are described in the light's local, canonical coordinate system. The light's position is defined to be at the origin. The light's primary direction is defined to be the negative Z-axis.

An ambient light is supposed to simulate the light in the world that bounces around so much that it has neither position nor direction. An ambient light illuminates all points equally. The only pertinent parameter for an ambient light is the color field that, as with all other types of lights, describes its intensity and color.

A directional light is modeled as a point light located an infinite distance away so that all rays that come from it to an object appear to be parallel to each other. Another simplifying assumption with directional lights is that they are not affected by attenuation effects. The real world model for a directional light is the sun. A directional light shines parallel rays of light down the negative Z-axis with no attenuation.

A point light is an infinitely small point of light located at a finite point in space. A point light simulates an infinitely small light bulb. A point light radiates light from the origin equally in all directions. A point light's intensity attenuates with distance from the light. The deaddistance and falloff parameters encode this distance attenuation.

The spot light is the most complicated light type. A spot light is similar to a real world flashlight. Its bulb is located at the origin, and its main illuminating direction is down the negative Z-axis. Like the point light, the spot light's intensity is attenuated with distance. In addition to distance attenuation, spot lights also experience angular attenuation. Rays of light radiate out from the origin with varying intensities based on their direction. The ray that is coincident with the negative Z-axis is the brightest, and the intensity falls off based on the angularfalloff parameter as the ray swings away from this direction.

All of the previous SLIDE entities are defined in the virtual 3D world, whereas the SLIDE window and viewport entities are defined in the 2D world of the monitor screen. Windows and viewports define the area of the monitor where the virtual 3D world described in the SLIDE scene graph will be projected to.

The window statement defines a window in the sense of a GUI application. The window has a very different role than other SLIDE entities. It is responsible for interacting with the windowing operating system to create an area that the renderer can draw in. The window parameters include: background, origin, size, resize, and ratio. The background field defines what the window's background color should be. Because of interactions with window managers, the background field is the only field of a window that will properly respond to dynamic values. The origin and size parameters define the initial geometry of the window when it is created; after this point it is in the hands of the window manager to alter its geometry. The origin parameter defines the position of the lower left hand corner of the window, and by adding on the size vector the upper right hand corner is defined. The origin and size parameters are defined in device-independent normalized device coordinates (NDC) in the range [0, 1] for all components, where the X-axis points to the right and the Y-axis points up. SLIDE is device independent, so it assumes that a square in NDC maps to a square in the real world. Mapping the NDC parametric square to the entire monitor would nonuniformly scale the image because most monitors are rectangular rather than square. To resolve this problem, SLIDE defines that the NDC square should be maximally centered on the monitor. The resize flag tells whether the window should be resizable or not. This flag is communicated to the window manager when the window is created. The ratio flag describes whether the aspect ratio of the window should be preserved while it is resizing. The possible values for the ratio flag are: SLF_FIXED, SLF_ANY, and SLF_FULL_SCREEN. SLF_FIXED states that the aspect ratio will be preserved. SLF_ANY means that the window can take on any aspect ratio the user decides. SLF_FULL_SCREEN plays a dual role. First it declares that the window will take up the full size of the screen when it is created, overriding the values in the origin and size fields. This is the only way to make a window the full size of the screen on a non-square monitor. The flag then acts like SLF_ANY from this point on. The reasoning behind this default behavior is that the SLIDE file has no prior knowledge of the window's aspect ratio, so it must be able to handle arbitrary aspect ratios. Like the resize flag, the meaning of the ratio flag is communicated to the window manager when the window is created.

Within a window it is possible to have multiple viewports that define subrectangular drawing areas. A viewport definition refers to the window it is within and specifies an origin and a size to describe its geometry. A viewport has its lower left hand corner at the origin, and the size parameter extends it up and to the right. Unlike a window whose NDC are always square, the NDC for viewports always fills the window area, so the aspect ratio of the window will affect the aspect ratio of a viewport. The behavior for overlapping viewports within a single window is undefined. The combination of the window and the viewport define the drawing area for a particular rendering with a particular camera. As windows are interactively dragged and scaled by users, the aspect ratio of the viewport changes. The viewport mapping of the camera's frustum must take this into account. If the projected view frustum is mapped to completely cover the viewport then nonuniform scaling of the image is possible which will have bad visual effects. To deal with this problem, SLIDE defines that the projected image should maximally fit into the viewport. This means that it is scaled uniformly and centered in the viewport.
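
The maximal fit rule amounts to choosing one uniform scale factor and centering the result. The following Python sketch shows the arithmetic; the function name `fit_rect` and the use of pixel units for the destination are assumptions made for the example.

```python
def fit_rect(src_w, src_h, dst_w, dst_h):
    """Scale a source rectangle uniformly so it maximally fits the destination,
    then center it. Returns (x_offset, y_offset, width, height)."""
    scale = min(dst_w / src_w, dst_h / src_h)
    width, height = src_w * scale, src_h * scale
    return ((dst_w - width) / 2, (dst_h - height) / 2, width, height)
```

For a square projection window of size 2 by 2 and an 800 by 600 viewport, `fit_rect(2, 2, 800, 600)` yields a 600 by 600 image offset 100 pixels from the left edge. The same arithmetic applies to centering the NDC square on a rectangular monitor.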

The render statement is the glue that combines the virtual world, a camera, lighting, and a viewport to describe a single rendering. The render statement can be thought of as a special root node, so it can be assigned an LOD flag. The render statement is a new invention in SLIDE over GLIDE. In SLIDE, the scene graph for a single rendering is a DAG, but the virtual world that is described in a SLIDE file can be a forest of DAG's. A render statement defines where the root of the scene is for a single rendering with a reference to a node. Any geometry nodes that are not descendants of a render statement will never be rendered. In practical terms, the render nodes are the roots of the DAG's in the forest. Note that different DAG's of the forest can share subbranches.

The render statement also defines which camera will be used to view the scene. This is a little bit tricky because there can be multiple cameras defined in the world, and each camera can be instanced multiple times. Since instancing in SLIDE does not imply copying of structures, it is necessary to have a mechanism for referring to an instance. The general name for this mechanism in SLIDE is a path. A path defines a traversal down the scene graph to a specific node. This idea was borrowed from OpenGL's name stack, and it is also similar to URL's on the Web. Paths deal only with the topology of the graph, so LOD flags will not prevent a path traversal from finding its intended node. In the render statement, a camera is specified with a camera path. A camera path is either the name of a camera or the name of a group followed by a list of instance names that tell which branch to take at each group node in the DAG and finally the name of the camera for verification. The group that begins the traversal does not have to be the root of the scene graph, although these two nodes are then considered to have the same local coordinate system. The camera path has two main benefits. It uniquely defines where to find the camera object that contains the projection information, and it defines a sequence of transformations that model the camera into the world without having to do a full depth first search. The inverse of these transformations is the view orientation matrix, which transforms the coordinate system of the root of the scene into the VRC coordinate system.
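A camera path traversal can be sketched in Python as follows. The dictionary layout of the scene graph, the function names, and the restriction to rigid transformations (rotation plus translation, so that the inverse has a closed form) are all assumptions made to keep the example short; they do not describe the data structures of an actual SLIDE renderer.

```python
def matmul(a, b):
    """Product of two 4x4 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

def translate(x, y, z):
    """4x4 translation matrix."""
    return [[1, 0, 0, x], [0, 1, 0, y], [0, 0, 1, z], [0, 0, 0, 1]]

IDENTITY = translate(0, 0, 0)

def rigid_inverse(m):
    """Inverse of a rotation-plus-translation matrix: [R^T | -R^T t]."""
    r_t = [[m[j][i] for j in range(3)] for i in range(3)]
    t = [m[i][3] for i in range(3)]
    inv_t = [-sum(r_t[i][k] * t[k] for k in range(3)) for i in range(3)]
    return [r_t[i] + [inv_t[i]] for i in range(3)] + [[0, 0, 0, 1]]

def resolve_camera_path(nodes, path):
    """Follow a camera path and return (camera-to-world, world-to-camera).

    nodes: hypothetical scene table, name -> {"kind": "group",
           "instances": {instance_name: (child_name, matrix)}} or
           {"kind": "camera"}.
    path:  [camera] or [group, instance, ..., instance, camera].
    """
    current, instance_names, camera_name = path[0], path[1:-1], path[-1]
    model = IDENTITY
    for inst in instance_names:
        # Each instance name selects exactly one branch, so no search is needed.
        child, xform = nodes[current]["instances"][inst]
        model = matmul(model, xform)
        current = child
    # The trailing camera name is only used to verify the traversal.
    if current != camera_name or nodes[current]["kind"] != "camera":
        raise ValueError("path does not end at camera %r" % camera_name)
    return model, rigid_inverse(model)
```

For a camera instanced inside a car group that is itself instanced in the world, the first matrix returned models the camera into the world and the second plays the role of the view orientation matrix.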

Lighting is specified similarly to the camera. A list of light paths can be specified in the render statement. A light path defines the list of transformations that model the light into the scene. Light paths can also have an optional on/off flag that can be used as a light switch.

Camera and light paths are useful for many scene modeling tasks. Cameras and lights can be modeled into the scene like any other node. This makes it easy to create moving vehicles with head lights and a camera from the point of view of the driver.

Once the virtual scene is set up with a camera and lighting, the render statement directs the resulting image to a viewport. A single viewport can have multiple rendering commands associated with it. Multiple render statements actively rendering into a single viewport yield undefined results because the visibility information is independent in each rendering. Multiple render statements can still be useful. A scene can be broken down so that different parts of the scene geometry can be rendered with different illumination. Or, by making use of the render statement's LOD flag, different camera views can be set up and switched between very easily.

The final job of the render statement is to handle user input. When input events are sent to a window, it calls on all of its viewports, which in turn call on each of their render statements. Render statements will only respond to user input if their LOD flag is not off. Each render statement manages a crystal ball interface and exports the values defined by the interface. These values include: SLF_AXIS_X, SLF_AXIS_Y, SLF_AXIS_Z, SLF_ANGLE, and SLF_SCALE. It is sometimes useful to have two render statements in the same viewport that should not share input or two render statements in separate viewports that should share input. To deal with this problem, a render statement can be assigned a tcltag that modifies the names of its exported values.

The SLIDE scheme for dynamics is a polling model. Floating point values and flags can be assigned static values or dynamic expressions that will be updated on each frame. A SLIDE renderer must maintain a heartbeat to signal when each frame is to be calculated. On each frame, the SLIDE world reevaluates all of the dynamic expressions and stores the new values in the scene graph. These expressions can be arbitrary Tcl expressions. A SLIDE renderer must also export SLF_TIME and SLF_FRAME Tcl variables that can be used by SLIDE scenes to drive their dynamics. Arbitrary Tcl code can be embedded in a SLIDE file in either a tclinit or tclupdate block. tclinit blocks are run once during the initialization of a scene, while tclupdate blocks are run at the beginning of each frame update before any dynamic expressions are evaluated. Tcl was chosen as the dynamics language for SLIDE because of the availability of the Tcl run time interpreter. Tcl is not the easiest language to use syntactically, but Tcl includes many powerful features that have contributed to the success of SLIDE.

In a tclinit block, initialization code is run. This is the place to define any Tcl procedures that will be run on each update. In addition, Tk widgets can be created here, so a SLIDE scene can build an application specific GUI for itself. This is incredibly powerful. It makes SLIDE into a 3D geometric prototyping language. Tcl also provides the ability to link compiled C code to Tcl procedures. There are two benefits to this. First, scene designers can compile C code into libraries that can be dynamically linked in as the scene is loading and create fast C procedures that can be called from Tcl. This is an important feature for optimizing update time. Second, Tcl interfaces can be made to parts of a SLIDE renderer's source code. This has been used to make a mechanism to call on the SLIDE data structure builder in the parser from a tclinit block. Every SLIDE language entity has been assigned a Tcl interface that can be called from a tclinit block to create geometry which is equivalent to geometry statically defined in the file. This allows users to procedurally generate geometry using Tcl as the procedural language. Complicated fractal geometries can be stored as small parameterized Tcl functions instead of large flattened sets of polygons.

The tclupdate block is necessary for coordinating dynamic values. tclupdate blocks are evaluated on every frame in the order in which they are parsed and before any dynamic expressions are evaluated. This is useful for doing large computations and storing them into tables for faster look up for any dynamic expressions that need them.
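The ordering of the polling model (initialization blocks once, then on every frame the update blocks followed by the dynamic expressions) can be sketched as a small Python class. This is only a stand-in: SLIDE uses Tcl, whereas here Python callables play the role of tclinit blocks, tclupdate blocks, and dynamic expressions, and the class and attribute names are hypothetical.

```python
class DynamicWorld:
    """Python stand-in for the per-frame evaluation order of SLIDE dynamics."""

    def __init__(self):
        # Variables visible to scene code; the renderer exports time and frame.
        self.vars = {"SLF_TIME": 0.0, "SLF_FRAME": 0}
        self.init_blocks = []      # run once, like tclinit blocks
        self.update_blocks = []    # run every frame, like tclupdate blocks
        self.dynamic_fields = {}   # field name -> expression over self.vars
        self.scene_values = {}     # values stored back into the scene graph

    def initialize(self):
        for block in self.init_blocks:
            block(self.vars)

    def step(self, frame, time_seconds):
        """One heartbeat: export time, run update blocks, then expressions."""
        self.vars["SLF_FRAME"] = frame
        self.vars["SLF_TIME"] = time_seconds
        for block in self.update_blocks:          # in the order they were added
            block(self.vars)
        for name, expr in self.dynamic_fields.items():
            self.scene_values[name] = expr(self.vars)
```

In this sketch an update block can compute a value once per frame (for example an angle derived from SLF_TIME), and any number of dynamic expressions can then read it cheaply, which mirrors the table lookup use described above.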

There have been a number of extensions made to the basic SLIDE language. Students are not expected to implement these extensions, but they are encouraged to use them in their final projects with the OpenGL accelerated SLIDE renderer.

The SLIDE language can be thought of as a text file interface to OpenGL. The OpenGL API has more features than SLIDE, so a common type of extension to SLIDE is to add more mechanisms that can take advantage of OpenGL. Two such extensions are fog and stencil patterns. Texture mapping, which was described in the surface section, is another OpenGL extension and is not part of the instructional core.

A fog or depth cue is useful for fading distant objects into the background. In SLIDE, a fog entity has the following parameters: type, color, start, end, and density. The fog color specifies what background color will be blended with a pixel based on its distance from the viewer. The fog types SLF_LINEAR, SLF_EXP, and SLF_EXP2 specify different blending functions. The SLF_LINEAR type implies a linear blending function which begins at the start distance from the viewer and reaches full background color at the end distance from the viewer. This linear function is not a physically based model of fog, but it is fast to compute and it gives a nice effect. The normal way to use linear fog is to place the end value on the back clipping plane and the start value some distance in front of it. Objects which move further and further away from the viewer slowly fade away until they finally disappear. Without fog, objects would pop out of existence when they reached the back clipping plane. SLF_EXP and SLF_EXP2 use exponential functions involving the density parameter to describe the blending function. (See on-line language specification for details.)
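For orientation, the three blending functions can be sketched in Python using the standard OpenGL fog equations. That SLIDE's SLF_LINEAR, SLF_EXP, and SLF_EXP2 follow exactly these formulas is an assumption here; the on-line language specification is the authority. The function names are hypothetical.

```python
import math

def fog_factor(fog_type, distance, start=0.0, end=1.0, density=1.0):
    """Fraction of the original pixel color that survives the fog.

    Uses the OpenGL fog equations (assumed to match SLIDE's fog types):
    1.0 means no fog, 0.0 means the pixel is entirely fog color.
    """
    if fog_type == "SLF_LINEAR":
        f = (end - distance) / (end - start)
    elif fog_type == "SLF_EXP":
        f = math.exp(-density * distance)
    elif fog_type == "SLF_EXP2":
        f = math.exp(-(density * distance) ** 2)
    else:
        raise ValueError("unknown fog type: %r" % fog_type)
    return min(1.0, max(0.0, f))   # clamp to [0, 1]

def apply_fog(pixel_rgb, fog_rgb, f):
    """Blend a pixel color with the fog color using factor f."""
    return tuple(f * p + (1.0 - f) * c for p, c in zip(pixel_rgb, fog_rgb))
```

With start at 10 and end at 20, a point at distance 15 keeps half of its own color under linear fog, and a point at the end distance is entirely fog colored.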

A SLIDE fog entity is optionally referenced inside a render statement. Fogs are like ambient lights, so there is no need for a fog path. A fog reference does have an on/off flag, so that the fog can be added or removed with a switch.

Stencils provide a mechanism for creating blue screening effects. Stencils can be any pattern in general, but SLIDE only supports three predefined stencil types: SLF_BOTH, SLF_ODD, and SLF_EVEN. A stencil flag can be optionally specified inside a render statement. The default is SLF_BOTH which means that there is no stencil effect. SLF_ODD and SLF_EVEN mean that only the odd and even horizontal lines of the image will be affected by the rendering, respectively. Then two separate render statements with opposite stencils can be mapped into the same viewport. This is useful for creating stereo image effects.
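The effect of the line stencils can be sketched in Python by treating an image as a list of scanlines. The function names are hypothetical, and numbering the scanlines from 0 (so that row 0 counts as even) is an assumption; a renderer may count rows differently.

```python
def row_passes_stencil(stencil, row):
    """True if a rendering with this stencil may write the given scanline."""
    if stencil == "SLF_BOTH":
        return True
    if stencil == "SLF_ODD":
        return row % 2 == 1
    if stencil == "SLF_EVEN":
        return row % 2 == 0
    raise ValueError("unknown stencil: %r" % stencil)

def composite(viewport_rows, rendered_rows, stencil):
    """Write a rendering into a viewport, touching only the stenciled rows."""
    return [new if row_passes_stencil(stencil, i) else old
            for i, (old, new) in enumerate(zip(viewport_rows, rendered_rows))]
```

Compositing a left eye rendering with SLF_EVEN and a right eye rendering with SLF_ODD into the same viewport interleaves the two images line by line, which is the layout used for stereo display.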

The RenderMan interface has also influenced the SLIDE language. SLIDE is a useful tool for modeling and previewing scenes before rendering them with a batch style, high-quality RenderMan compatible renderer. The SLIDE language is designed for real time rendering, so it constrains itself to only specify rendering features that can be implemented with interactive performance. To make SLIDE a more useful tool for modeling and previewing photo-realistic scenes, many RenderMan inspired extensions have been made to SLIDE. Some of these extensions are completely ignored by SLIDE renderers, but they are stored in the data structure so that they can be included in the RenderMan output. RenderMan extensions that have been added to SLIDE include: smooth surfaces, area light sources, and RIB strings.

The core SLIDE language only includes polygons. RenderMan nicely renders smooth, curved surfaces such as spheres, cylinders, cones, tori, bsplinepatches, and bezierpatches. To permit previewing of such primitives in the SLIDE renderer, these same primitives have been added to SLIDE as parameterized polygonal objects. SLIDE can render these objects at interactive speeds. Then when outputting RIB, the primitives can be directly translated into their RenderMan descriptions, so that the SLIDE real-time representation of the objects does not hinder their final appearance in the RenderMan rendering. Smooth surface primitives have made SLIDE a more powerful modeling tool and a convenient previewer for RenderMan scenes.

Area light sources are important for creating soft shadows. The basic light sources cast very harsh shadows with unnatural sharp boundaries. Area light sources are much more computationally expensive, which makes them poor candidates for real-time rendering. Since it is not possible to do area light sampling in real-time, the SLIDE renderer makes a crude approximation by using a single spot light to represent an area light source. This approximation portrays the fact that the area light source is giving off light, but it is not useful for previewing exactly how objects will be illuminated using RenderMan. An area light source is similar to a group in SLIDE. It has parameters to specify its color, and otherwise it is exactly like a normal group. All surfaces which are descendants of an area light source become emitters of its light color. Area light sources are referenced in render statements using arealight paths. This is analogous to the standard light sources.

When a SLIDE file is turned into a RenderMan RIB file, it is sometimes necessary for the scene builder to specify extra RIB commands which are not supported by SLIDE. SLIDE renderers add comments to their RIB output to make it easier for users to correlate between the two descriptions. With this annotation it is possible for users to modify the RIB file by hand using a text editor to add any extra commands. This process is very tedious, especially if the ultimate goal is an animation. The SLIDE RIB strings provide a general mechanism which allows scene designers to specify extra RIB information, like shaders, in the SLIDE description. The SLIDE renderer will completely ignore these fields when rendering, but it will transfer them through when a RenderMan RIB file is output. There are two types of RIB strings, ribbegin and ribend. The ribbegin and ribend insert their strings before and after the RIB translation of the SLIDE entity respectively.
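The pass-through behavior of RIB strings can be sketched in Python. The function name and the representation of RIB output as a list of text lines are assumptions for illustration; how a SLIDE renderer actually structures its RIB writer is not described here.

```python
def emit_entity_rib(entity_rib, ribbegin=None, ribend=None):
    """Wrap an entity's RIB translation with its optional RIB strings.

    entity_rib: lines produced by translating one SLIDE entity to RIB.
    ribbegin / ribend: scene designer supplied text, copied through verbatim
    and never interpreted (hypothetical parameters mirroring the two fields).
    """
    lines = []
    if ribbegin:
        lines.append(ribbegin)     # placed before the entity's translation
    lines.extend(entity_rib)
    if ribend:
        lines.append(ribend)       # placed after the entity's translation
    return lines
```

For example, passing a shader declaration such as `Surface "plastic"` as the ribbegin string places it immediately before the entity's geometry in the output, so it does not have to be added by hand to every frame of an animation.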

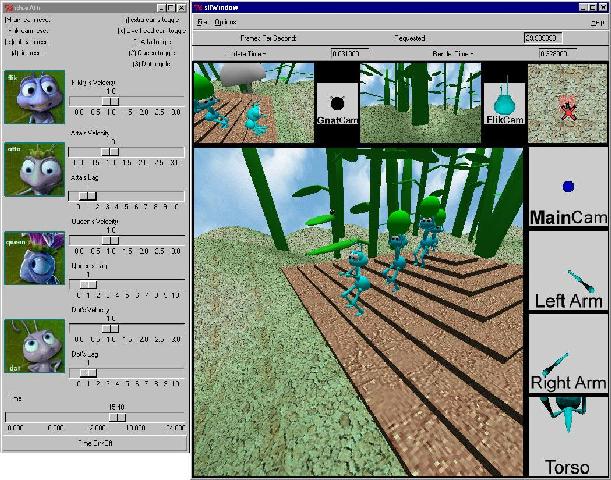

A SLIDE renderer is similar to a VRML browser. It must be able to read in any SLIDE language file and run the simulation that it describes. Two such SLIDE renderers have been implemented. These two implementations are designed to accomplish two different tasks. The first program implements the SLIDE scene hierarchy plus a software rendering pipeline. The purpose of this renderer is to teach students how the internal algorithms of an OpenGL-like rendering pipeline work. This program is the basis for the SLIDE programming laboratories. The second implementation uses the standard OpenGL API for rendering and relies on hardware acceleration to run interesting SLIDE scenes in real time.